Post by Admin on Nov 2, 2017 19:03:07 GMT

Watch: Facebook, Twitter & Google to testify in House Russia hearings

The old saying about a lie traveling halfway around the world while the truth is still putting on its shoes has never seemed more relevant than in this new era of propaganda-pushing Twitter trolls and fake Facebook users.

But here’s a truth that we hope sinks in quickly in Silicon Valley: Without major changes to the way business is done at Facebook, Twitter and Google, the regulations that these California companies have spent years trying to avoid could become a reality.

Post by Admin on Nov 4, 2017 19:13:19 GMT

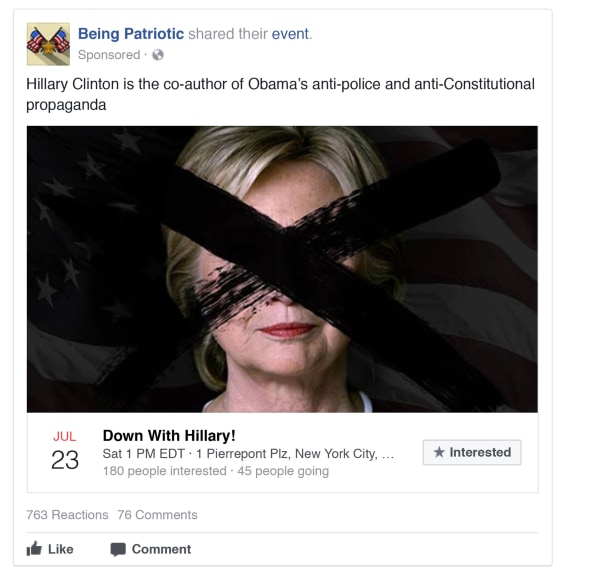

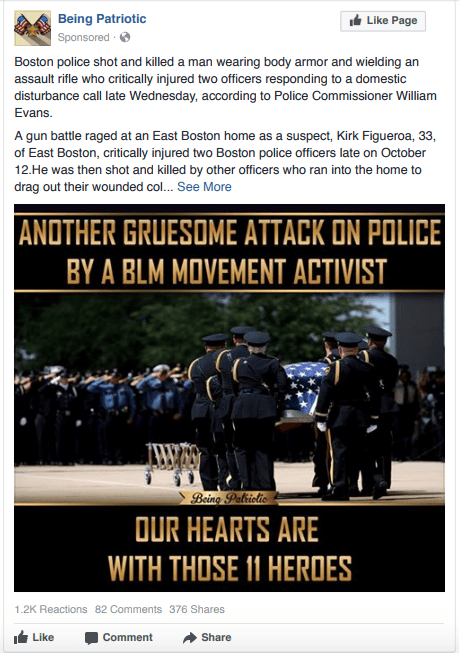

Have you seen one of these posts in your Facebook or Instagram feed? If so, you are among the roughly 150 million people duped by content placed on the social network by the Russian troll farm known as the Internet Research Agency before and after last year’s U.S. presidential election.

These advertisements, among a cache of more than 3,000 ads and 80,000 organic posts identified by Facebook, were released today at the conclusion of a two-day Congressional hearing, in the first official curtain-raiser on the kinds of disinformation that spread on the platform before and after last year’s elections.

Facebook began to disclose the ads to Congress in early October, but had previously said it would not release them publicly. However, a number of the posts have already been identified and published in the media, thanks in part to cached data.

Post by Admin on Mar 19, 2018 18:44:57 GMT

Protecting people’s information is at the heart of everything we do, and we require the same from people who operate apps on Facebook. In 2015, we learned that a psychology professor at the University of Cambridge named Dr. Aleksandr Kogan lied to us and violated our Platform Policies by passing data from an app that was using Facebook Login to SCL/Cambridge Analytica, a firm that does political, government and military work around the globe. He also passed that data to Christopher Wylie of Eunoia Technologies, Inc.

Like all app developers, Kogan requested and gained access to information from people after they chose to download his app. His app, “thisisyourdigitallife,” offered a personality prediction, and billed itself on Facebook as “a research app used by psychologists.” Approximately 270,000 people downloaded the app. In so doing, they gave their consent for Kogan to access information such as the city they set on their profile, or content they had liked, as well as more limited information about friends who had their privacy settings set to allow it.

Although Kogan gained access to this information in a legitimate way and through the proper channels that governed all developers on Facebook at that time, he did not subsequently abide by our rules. By passing information on to a third party, including SCL/Cambridge Analytica and Christopher Wylie of Eunoia Technologies, he violated our platform policies. When we learned of this violation in 2015, we removed his app from Facebook and demanded certifications from Kogan and all parties he had given data to that the information had been destroyed. Cambridge Analytica, Kogan and Wylie all certified to us that they destroyed the data.

Post by Admin on Mar 20, 2018 18:21:38 GMT

Facebook's shares have fallen sharply, wiping $37bn off the firm's value, as it faces questions from US and UK politicians about its privacy rules.

The social network is under fire after reports on how Cambridge Analytica, which some believe helped Donald Trump win the US election, acquired and used Facebook's customer information.

Information Commissioner Elizabeth Denham says she is seeking a warrant, which will be used to look at the databases and servers used by British data analytics firm Cambridge Analytica.

Facebook shares ended trading 6.7% lower at $172.56, wiping almost $37bn off the social network's market value.

Post by Admin on Mar 21, 2018 19:00:40 GMT

Working with a whistleblower who helped set up Cambridge Analytica, the Observer and Guardian have seen documents and gathered eyewitness reports that lift the lid on the data analytics firm that helped Donald Trump to victory. The company is currently being investigated on both sides of the Atlantic.

It is a key subject in two inquiries in the UK - by the Electoral Commission, into the firm's possible role in the EU referendum, and the Information Commissioner's Office, into data analytics for political purposes - and one in the US, as part of special counsel Robert Mueller's probe into Trump-Russia collusion.
